Chapter 5.1 – What Is Generative AI

What generative AI actually is, how it works, and why it feels different from everything that came before — from an engineer’s perspective.

What Is Generative AI?

When I first heard generative AI, I assumed it was just another version of machine learning — maybe better autocomplete, maybe nicer chatbots. I’d already seen plenty of “AI assistants” that couldn’t answer basic questions or wrote unusable code.

So I didn’t expect much.

What surprised me wasn’t that these systems could generate text. It was how they behaved once they got good enough.

- They didn’t just answer questions.

- They continued conversations.

- They adapted tone.

- They explained trade-offs.

- They rewrote things based on feedback.

Not because they understand — but because the patterns they’ve learned are rich enough to look like understanding.

Classic ML systems answer questions. Generative models continue conversations.

That difference is what generative AI actually is.

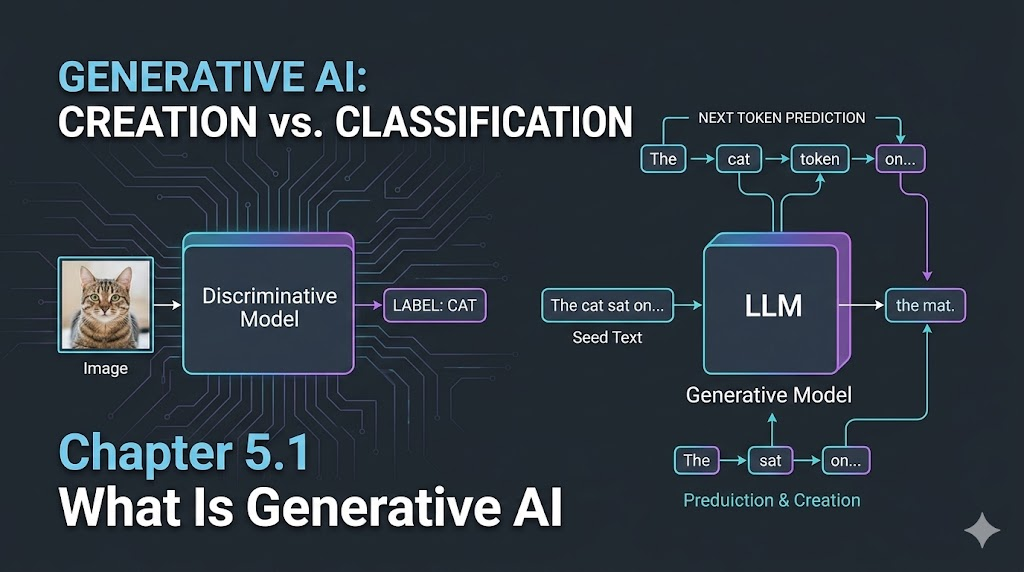

What “Generative” Really Means

Most ML systems we’ve worked with answer questions like:

- “Is this deployment risky?”

- “Is this log line an error?”

- “Which category does this ticket belong to?”

They choose between existing labels.

Generative models do something different:

- “Write a deployment plan.”

- “Summarize this incident.”

- “Explain this Terraform error.”

- “Draft an email to the SRE team.”

They create new content instead of picking from a fixed set.

That shift sounds subtle, but it changes how you use the system. You stop asking for answers and start asking for drafts, ideas, explanations, and starting points.

Engineer’s reflection: Discriminative models classify. Generative models collaborate.

Practitioner’s Tip: Don’t treat generative AI like a search engine. Treat it like a creative partner—one that drafts, rewrites, and helps you think faster.

The Moment It Clicked for Me

Realization: The first time generative AI solved a real engineering problem for me, I stopped seeing it as hype and started seeing it as a tool I could trust (with review).

I didn’t become convinced by demos. I became convinced the first time it helped me with real work.

I pasted a messy Terraform error and got:

- A breakdown of what failed

- Why it failed

- A likely fix

- A safer alternative

Then I pasted a 1000-line log dump and asked: “Summarize what happened.”

It gave me a readable incident narrative.

Then I asked it to rewrite a customer email in a calmer tone — and it nailed it.

None of these were perfect. But they were 80% right, instantly. And that was enough to change my workflow.

Instead of starting from blank pages, I started from drafts.

Instead of writing everything, I started editing.

That’s when I realized this wasn’t automation. It was something else.

How Generative AI Actually Works (Without the Mysticism)

Under the Hood: At its core, models like GPT are trained to do one thing: predict the next token. No magic, no reasoning—just pattern continuation at scale.

Under the hood, models like GPT are trained to do one thing:

Predict the next token.

A token might be:

- A word

- Part of a word

- A symbol

- A punctuation mark

During training:

- The model sees massive amounts of real text

- It learns statistical patterns of what tends to come next

During usage:

- You give it a prompt

- It predicts the next token

- Then the next

- Then the next

- Until it decides it’s done

That’s it.

No reasoning engine.

No knowledge database.

No symbolic logic.

Just pattern continuation at scale.

flowchart LR

Input[Prompt / Context] --> Model[Generative Model]

Model --> Output[Generated Text]

style Input fill:#e3f2fd,stroke:#1976d2,stroke-width:2px

style Model fill:#fff3e0,stroke:#f57c00,stroke-width:2px

style Output fill:#e8f5e9,stroke:#388e3c,stroke-width:2px

Infra Analogy: Like generating a config file line by line—each line depends on what’s already written, and you stop when the file looks complete.

Why This Feels So Different from Previous AI

What makes this powerful isn’t the algorithm. It’s:

- Scale

- Data

- Architecture (transformers)

- Training compute

That combination is what turns “next token prediction” into something that feels like conversation.

Earlier ML systems felt like tools. Generative models feel like collaborators.

Not because they’re smarter—but because:

- They accept vague input

- They produce structured output

- They adapt to feedback

- They handle ambiguity well

That’s rare in software. Most systems need:

- Precise inputs

- Fixed schemas

- Known workflows

Generative AI tolerates messiness—logs, half-written thoughts, unclear requirements—and still produces something usable.

For engineers, that’s a big shift.

Where This Shows Up in Real Engineering Work

In my day-to-day, generative AI shows up less as “AI features” and more as workflow accelerators:

- Drafting migration plans

- Summarizing incidents

- Writing runbooks

- Explaining unfamiliar code

- Generating Terraform skeletons

- Rewriting customer communications

None of this replaces engineering judgment. But it compresses time between idea → draft → usable output.

Engineer’s Insight: The value isn’t correctness—it’s momentum. Use LLMs to get moving, not to get perfect answers.

Generative vs Discriminative (Why This Matters)

Quick Compare: Discriminative models answer; generative models create. This shift changes how we work with AI.

Most ML you’ve seen is probably discriminative:

| Discriminative ML | Generative AI |

|---|---|

| “Is this risky?” | “Write a risk analysis.” |

| “Spam or not?” | “Draft a reply.” |

| “Which category?” | “Explain the issue.” |

Discriminative models answer closed questions. Generative models open new ones.

That’s why generative AI feels like a platform shift, not just a model upgrade.

What Generative AI Is Not

Some things I had to unlearn:

- It doesn’t “understand”

- It doesn’t “reason” like humans

- It doesn’t “know facts”

- It doesn’t guarantee correctness

- It predicts what sounds right, not what is right.

Which means:

- You always verify

- You treat outputs as drafts

- You stay in the loop

Engineer’s Warning: Treat LLMs like junior engineers with infinite patience and zero accountability. Review everything before you trust it.

What I Wish I’d Known Earlier

Takeaway:

- Generative AI creates, not classifies

- It works by predicting what comes next—at scale

- Its real value is accelerating thinking, not replacing it

- The human-in-the-loop model isn’t optional—it’s the design

- This isn’t automation—it’s collaboration with probabilistic systems

What’s Next?

➡ Series 5 – Chapter 5.2: How LLMs Are Trained (High Level)

Next, we’ll look at:

- Where training data comes from

- What “pre-training” actually means

- Why fine-tuning and RLHF exist

- How training shapes model behavior

Architectural Question: How would you design a workflow or system to ensure that generative AI outputs are always reviewed and improved by humans before being used in production?

If Chapter 5.1 was about what generative AI feels like, Chapter 5.2 is about what’s actually happening under the hood.